Google DeepMind launched Gemini Robotics-ER 1.6 on April 14, 2026. The new model advances how robots reason about physical environments. It targets key challenges in spatial understanding, task planning, and autonomous decision-making.

Gemini Robotics-ER 1.6 enhances robot autonomy through advanced AI reasoning and spatial understanding. [Google DeepMind]

The release marks a significant step in the field of embodied AI. Robots equipped with the model can now process complex visual data and act on it without constant human input. Developers can access the model via the Gemini API and Google AI Studio as of the April 14 launch.

What Is Gemini Robotics-ER 1.6 and How Does It Work?

Gemini Robotics-ER 1.6 functions as the high-level reasoning layer for robotic systems. It processes visual input from multiple camera feeds and translates that data into actionable decisions. The model connects to external tools, including Google Search and vision-language-action (VLA) models.

Developers can also define custom third-party functions that the model can call during operation. This makes it adaptable across a range of robotic applications.

Rather than relying on a single fixed pipeline, the model coordinates multiple AI systems to complete tasks.
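The coordination pattern described above can be pictured as a small dispatcher that routes tool requests to registered functions. The sketch below is illustrative only: `ToolRegistry`, `move_gripper`, and the request shape `{"name": ..., "args": {...}}` are hypothetical names chosen for this example, not part of the Gemini API or any Google DeepMind code.

```python
from typing import Any, Callable, Dict

# Hypothetical type alias: a tool is any plain Python callable.
ToolFn = Callable[..., Any]


class ToolRegistry:
    """Maps tool names to callables so a reasoning layer can invoke them by name."""

    def __init__(self) -> None:
        self._tools: Dict[str, ToolFn] = {}

    def register(self, name: str, fn: ToolFn) -> None:
        self._tools[name] = fn

    def dispatch(self, call: Dict[str, Any]) -> Any:
        """Run one request of the assumed form {"name": ..., "args": {...}}."""
        name = call["name"]
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name](**call.get("args", {}))


def move_gripper(x: float, y: float) -> str:
    # Stand-in for a low-level controller such as a VLA model.
    return f"moved to ({x:.1f}, {y:.1f})"


registry = ToolRegistry()
registry.register("move_gripper", move_gripper)

# A request shaped like what a reasoning model might emit (illustrative only).
result = registry.dispatch({"name": "move_gripper", "args": {"x": 12.0, "y": 3.5}})
print(result)
```

Keeping the registry separate from the model means a custom third-party function can be added or swapped without changing the reasoning layer, which is one plausible reading of the adaptability the article describes.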

Google DeepMind researchers Laura Graesser and Peng Xu led the model’s development. They report that ER 1.6 outperforms both its predecessor, ER 1.5, and Gemini 3.0 Flash on spatial and physical reasoning benchmarks. Key improvements appear in pointing accuracy, object counting, and success detection.

Spatial Reasoning and Multi-View Understanding Get Major Upgrades

One of the most notable improvements in ER 1.6 is its spatial reasoning capability. The model now processes multiple camera streams at once. It understands how different viewpoints combine into one coherent picture, even in dynamic or partially obstructed environments.

Most modern robotic setups use overhead cameras alongside wrist-mounted feeds. A robot must correlate these viewpoints in real time to perform reliably. ER 1.6 advances this multi-view reasoning, making it more consistent across changing conditions.

Multi-view reasoning allows robots to combine perspectives for accurate task execution. [Robotiq]

This improvement also benefits task success detection. Knowing when a task has been completed correctly is as important as performing it. ER 1.6 uses visual reasoning to determine whether a task succeeded and whether the robot should retry or move forward.
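The retry-or-move-forward decision can be sketched as a simple loop. This is a minimal illustration under stated assumptions: `run_with_retries`, `try_grasp`, and `grasp_holds` are hypothetical names, and the success check is a simulated stand-in for the visual judgment a reasoning model would supply.

```python
from typing import Callable


def run_with_retries(
    act: Callable[[], None],
    succeeded: Callable[[], bool],
    max_attempts: int = 3,
) -> int:
    """Repeat act() until succeeded() is True.

    Returns the number of attempts used, or -1 if every attempt failed.
    """
    for attempt in range(1, max_attempts + 1):
        act()
        if succeeded():
            return attempt
    return -1


# Simulated robot state: the grasp only holds on the second try.
state = {"tries": 0}


def try_grasp() -> None:
    state["tries"] += 1


def grasp_holds() -> bool:
    # Stand-in for a visual success check; a real system would query the model.
    return state["tries"] >= 2


attempts = run_with_retries(try_grasp, grasp_holds)
print(attempts)
```

Capping the number of attempts keeps the robot from looping indefinitely when the success check never passes, at which point a real deployment would likely escalate to an operator.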

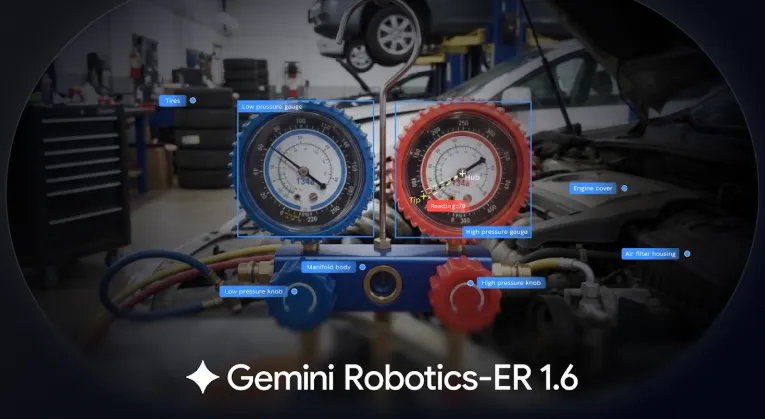

New Instrument Reading Capability Targets Industrial Settings

Google DeepMind introduced instrument reading as a new capability in ER 1.6. The model can now interpret analog gauges, sight glasses, and digital readouts. These instruments appear frequently in manufacturing plants, refineries, and industrial facilities.

Reading a gauge involves more than identifying a needle. The model must detect tick marks, read unit labels, account for camera distortion, and in some cases combine readings from multiple needles. ER 1.6 handles all of these steps through a combination of visual perception and world knowledge.
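Once the needle and the scale's end ticks have been located, the final step is simple arithmetic. The sketch below shows only that last step, assuming a linear scale and angles already extracted from the image. The function name `gauge_value` and its parameters are hypothetical; the article does not describe how ER 1.6 computes readings internally.

```python
def gauge_value(
    needle_deg: float,
    min_deg: float,
    max_deg: float,
    min_value: float,
    max_value: float,
) -> float:
    """Linearly map a needle angle to a reading between the scale's end ticks."""
    if max_deg == min_deg:
        raise ValueError("scale end ticks must be at different angles")
    # Fraction of the way the needle sits between the first and last tick.
    fraction = (needle_deg - min_deg) / (max_deg - min_deg)
    return min_value + fraction * (max_value - min_value)


# A 0-10 scale spanning 270 degrees, with the needle at the midpoint.
reading = gauge_value(
    needle_deg=90.0, min_deg=-45.0, max_deg=225.0, min_value=0.0, max_value=10.0
)
print(reading)  # 5.0
```

The harder parts named above, such as correcting for camera distortion or combining several needles, would have to happen before this mapping and are not covered here.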

This capability came out of DeepMind’s ongoing collaboration with Boston Dynamics. The feature addresses real industrial use cases where robots need to monitor equipment without constant human supervision.

It signals that practical deployment needs, not just benchmark scores, shaped the model’s development.

Boston Dynamics Integrates AI Robotics Model Into Spot Robot Platform

Boston Dynamics announced a partnership with Google DeepMind and Google Cloud on April 14, 2026. The companies integrated Gemini and Gemini Robotics-ER 1.6 into Boston Dynamics’ Orbit software platform.

The integration specifically targets the AI Visual Inspection (AIVI) and AIVI-Learning systems that power the Spot robot.

Spot now uses the model to perform complex inspection tasks autonomously. These include monitoring equipment like gauges and conveyor systems, detecting hazards such as spills, and conducting safety audits. The robot can also answer questions about a facility based on what its cameras capture.

Boston Dynamics’ Spot robot uses Gemini Robotics-ER 1.6 for autonomous inspection and hazard detection. [Boston Dynamics]

“Advances like Gemini Robotics ER 1.6 mark an important step toward robots that can better understand and operate in the physical world. Capabilities like instrument reading and more reliable task reasoning will enable Spot to see, understand, and react to real-world challenges completely autonomously,” said Marco da Silva, Vice President and General Manager of Spot at Boston Dynamics.

Transparent AI Reasoning Addresses Industrial Accountability Concerns

Boston Dynamics highlighted a feature called transparent reasoning as part of the integration. The system shows users how the AI reaches its conclusions during inspections. This feature addresses a common concern in industrial settings: accountability for automated decisions.

When a robot makes a decision in a facility, operators need to understand the basis for that decision. Transparent reasoning makes the AI’s logic visible and auditable. This builds trust and supports compliance in regulated industries.

The company also noted that AI models are updated through the cloud without interrupting robot operations. Boston Dynamics describes this as zero-downtime upgrades. It means facilities can adopt new capabilities without taking robots offline during active shifts.

Gemini Robotics-ER 1.6 Sets New Safety Standards for Autonomous Robots

Google DeepMind describes ER 1.6 as its safest robotics model to date. The model shows stronger compliance with safety policies on adversarial spatial reasoning tasks compared to previous versions. This matters because robots in industrial and commercial environments interact with unpredictable conditions.

Carolina Parada, Head of Robotics at Google DeepMind, explained the benchmark the team uses to measure understanding.

According to her, the system should answer the way a human would. This human-centered standard guides how the team evaluates the model’s reasoning quality.

The emphasis on safety reflects broader concerns about deploying autonomous AI in high-stakes environments. Robots that misidentify hazards or make incorrect decisions can cause costly or dangerous outcomes. DeepMind’s safety improvements aim to reduce these risks at scale.

Broader Industry Trend: Combining Physical Robots With Large AI Models

The release of Gemini Robotics-ER 1.6 reflects a wider shift in the robotics industry. Companies increasingly pair physical machines with large-scale AI models to expand what those machines can do. The goal is to move beyond pre-programmed behaviors toward systems that interpret environments and learn over time.

Agile Robots, which operates more than 20,000 robotic units across factories in China, Europe, and the United States, recently partnered with Google DeepMind.

The two companies exchange real-world deployment data to improve model performance. Target applications include electronics manufacturing, electric vehicle production, and data center maintenance.

AI-powered robots are transforming industries with smarter automation and real-world adaptability. [LinkedIn]

The industrial robotics market is currently valued at approximately $200 billion. Labour shortages and supply chain pressures drive demand for autonomous systems. DeepMind’s strategy of building AI that reasons reliably in real environments positions it within a competitive field that includes Figure AI and state-backed robotics programs in China.

Developer Access and What Comes Next for AI-Powered Robotics

Developers can access Gemini Robotics-ER 1.6 through the Gemini API and Google AI Studio starting April 14, 2026. Google DeepMind also published a Colab notebook with configuration examples and prompting guides. The notebook gives development teams a practical starting point for building autonomous systems.

The model’s availability through standard API channels lowers the barrier for teams outside of large robotics companies.

Startups and research institutions can now integrate high-level embodied reasoning into their own systems. This opens development across sectors, including healthcare, logistics, and retail automation.

Whether Gemini Robotics-ER 1.6 delivers consistent results across diverse and unpredictable environments remains to be seen.

Real-world conditions, including varying lighting, unexpected objects, and complex instructions, test AI systems in ways that controlled benchmarks do not. Field performance will determine how quickly the technology gains traction in commercial deployment.

FAQs

Q1: What is Gemini Robotics-ER 1.6?

A: Gemini Robotics-ER 1.6 is an advanced AI model developed by Google DeepMind to improve robot autonomy through better spatial reasoning, task planning, and decision-making.

Q2: What makes Gemini Robotics-ER 1.6 different from earlier versions?

A: It offers improved multi-view spatial understanding, enhanced task success detection, and new capabilities like instrument reading, outperforming previous versions like ER 1.5.

Q3: How does the model help robots operate autonomously?

A: The model processes visual data from multiple cameras, interprets environments, and makes decisions without constant human input, enabling more independent robot actions.

Q4: What industries can benefit from this technology?

A: Industries such as manufacturing, logistics, healthcare, and energy can benefit, especially for tasks like inspection, monitoring, and automation.

Q5: What is the role of Boston Dynamics in this development?

A: Boston Dynamics partnered with Google DeepMind to integrate the model into its Spot robot for autonomous inspection and safety tasks.

Q6: Can developers access Gemini Robotics-ER 1.6?

A: Yes, developers can access the model through the Gemini API and Google AI Studio, allowing them to build and test autonomous robotic applications.

Q7: What is “transparent reasoning” in this context?

A: Transparent reasoning allows users to see how the AI reaches decisions, improving trust, accountability, and compliance in industrial environments.

Q8: Is Gemini Robotics-ER 1.6 safe for real-world use?

A: Google DeepMind states that this is its safest robotics model yet, with improved compliance in safety benchmarks and better handling of real-world uncertainties.

Disclaimer

This article is published by Colitco for informational purposes only. It is based on publicly available information and official statements from Google DeepMind and related companies. It does not constitute financial, investment, or technological advice. Readers should conduct their own research before making decisions based on this content. Colitco does not guarantee the accuracy or completeness of the information and is not liable for any outcomes resulting from its use.

Sources

https://www.mobileroboticsinsider.com/p/agile-robots-teams-up-with-google

https://blog.google/innovation-and-ai/models-and-research/google-deepmind/gemini-robotics-er-1-6/

https://spectrum.ieee.org/boston-dynamics-spot-google-deepmind

https://deepmind.google/blog/gemini-robotics-er-1-6/

Tags: Gemini Robotics-ER 1.6

Last modified: April 16, 2026