The AI chip race is heating up, and analysts are placing their bets firmly on one side.

As artificial intelligence infrastructure spending continues to surge across global markets, the Nvidia vs Micron comparison has become one of the most hotly debated conversations in tech investing circles. Both companies sit at the heart of the AI boom. But when analysts weigh growth trajectory, market dominance, and long-term upside, one name keeps coming out on top: Nvidia.

The Two Giants Behind the AI Build-Out

What Each Company Actually Does

Nvidia and Micron both play critical roles in building the infrastructure that powers modern AI — but they do very different things.

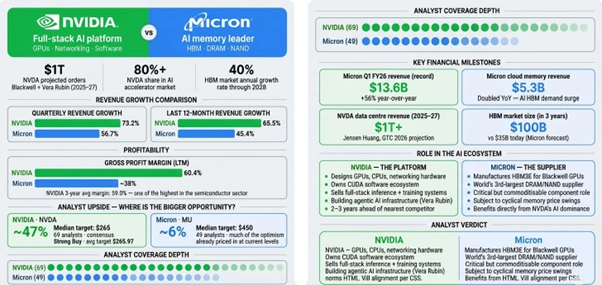

Nvidia designs and supplies graphics processing units (GPUs), central processing units (CPUs), and high-performance networking hardware. Its GPUs dominate the AI training and inference market, commanding more than 80% market share in AI accelerators. That kind of market control is extraordinarily rare in the semiconductor industry.

Micron, on the other hand, manufactures memory and storage chips, including high-bandwidth memory (HBM), that store and move the enormous volumes of data AI workloads demand. It ranks as the world’s third-largest supplier of DRAM and NAND flash products and has been gaining market share as AI memory demand accelerates.

Both companies are essential. But they are not equal bets.

Figure 1: NVIDIA dominates the AI stack; Micron provides memory. Analysts project 47% upside for NVIDIA versus 6% for Micron.

What the Numbers Actually Show

Nvidia’s Financial Lead Is Difficult to Overstate

Nvidia’s quarterly revenue growth reached 73.2%, compared with Micron’s 56.7%. Over the last twelve months, Nvidia’s revenue grew 65.5%, ahead of Micron’s 45.4%. Nvidia also leads on profitability — its last-twelve-months margin sits at 60.4%, with a three-year average of 59.0%.

Those figures explain why so many analysts still favour Nvidia in a direct Nvidia vs Micron comparison, even as Micron’s growth story attracts genuine excitement.

Among 69 analysts covering Nvidia, the median price target sits at $265 per share, implying roughly 47% upside from current levels. Among 49 analysts covering Micron, the median target is $450 per share, implying just 6% upside from its current price of around $426.
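The implied-upside figures above are simple ratios of price target to current share price. The sketch below reproduces that arithmetic using only the numbers quoted in this article. The function names are illustrative, and the Nvidia share price it backs out is an inference from the quoted target and upside, not a figure the article states.

```python
def implied_upside(price_target: float, current_price: float) -> float:
    """Percentage gain implied by moving from current_price to price_target."""
    if current_price <= 0:
        raise ValueError("current_price must be positive")
    return (price_target / current_price - 1.0) * 100.0


def implied_price(price_target: float, upside_pct: float) -> float:
    """Share price implied by a price target and a quoted upside percentage."""
    return price_target / (1.0 + upside_pct / 100.0)


# Micron: $450 median target against a price of around $426 (both from the article).
micron_upside = implied_upside(450.0, 426.0)

# Nvidia: the article gives a $265 target and roughly 47% upside, but no price.
# Backing out the price is an assumption-laden inference, not a quoted figure.
nvidia_price = implied_price(265.0, 47.0)

print(f"Micron implied upside: {micron_upside:.1f}%")
print(f"Nvidia implied share price: ${nvidia_price:.2f}")
```

Run as written, the Micron figure comes out a little under 6%, consistent with the rounded number above, and the Nvidia target implies a share price of roughly $180.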

That gap in projected upside is striking. Micron’s share price already reflects a lot of optimism. Nvidia, by contrast, still has significant room to run, at least according to the consensus on Wall Street.

A separate tally of 38 analysts covering Nvidia stock carries a consensus rating of “Strong Buy” and an average price target of $265.97, which implies a roughly 49% increase in the stock price over the next year.

Why Analysts See More Upside in Nvidia Right Now

GTC 2026 Changed the Conversation

Nvidia’s annual developer conference, GTC 2026, held in San Jose this week, was a watershed moment for the company’s long-term narrative.

At the conference, CEO Jensen Huang told a packed audience that he expects purchase orders between Blackwell and Vera Rubin chips to reach $1 trillion through 2027, doubling an earlier projection of $500 billion through 2026.

One trillion dollars in projected chip orders through 2027. And that figure only covers Nvidia’s primary GPU lines.

Bank of America Securities analyst Vivek Arya reaffirmed a bullish outlook on Nvidia following GTC 2026, maintaining a Buy rating with a $300 price forecast. Arya pointed out that Nvidia’s projected $1 trillion-plus in data centre revenue for 2025–2027 excludes contributions from CPUs, storage systems, and newer rack offerings, and that these segments could expand the total opportunity by roughly 50%.

In other words, even the trillion-dollar number undersells the full picture.

Wedbush’s veteran tech analyst Dan Ives hailed Nvidia’s GTC keynote as a major “confidence boost” for investors, saying the company remains “alone at the top of the AI mountain” and is “two to three years ahead of anyone, including Google.”

The $700B data centre expansion by platforms like Microsoft and Meta continues to funnel enormous capital directly into Nvidia’s pipeline, reinforcing the demand story analysts keep pointing to.

How Nvidia Maintains Its Edge

It’s About the Full Stack, Not Just the Chips

One of the most important insights from the Nvidia vs Micron comparison is that Nvidia competes on a completely different level when it comes to software and ecosystem depth.

Nvidia’s ecosystem and software depth represent the moat that ensures its dominance into the foreseeable future. For cloud hyperscalers and large language model developers, the decision to include Nvidia as part of their infrastructure mix has already been made.

At GTC 2026, Huang unveiled the Vera Rubin platform, a full-stack AI computing system comprising seven chips, five rack-scale systems, and one supercomputer purpose-built for agentic AI workloads.

Nvidia wants to own not just training, but the economics of inference and the infrastructure around agentic AI. The company’s biggest hardware announcements at GTC pushed in exactly that direction.

Huang described Nvidia as “the inference king,” referencing a process of extreme co-design where software and silicon are developed in tandem — something competitors cannot easily replicate.

The supply chain dynamics between these two companies also tell an important part of the story of how Nvidia’s AI funding and investment ecosystem continues to evolve.

Micron’s Strengths Deserve Credit Too

A Compelling Long-Term Bet — Just a Different One

None of this means Micron is a bad investment. Far from it.

Micron generated a record $13.6 billion in revenue during its fiscal 2026 first quarter, up 56% year over year. Revenue from the company’s cloud memory segment doubled to $5.3 billion as AI data centre HBM demand surged. Earnings per share jumped 175% from the year-ago period.

Those are extraordinary results. And Micron’s HBM chips go directly into Nvidia’s flagship Blackwell GPUs — meaning its fortunes are partly tied to Nvidia’s success.

- Micron’s HBM3E products attract strong interest for their superior energy efficiency

- The company raised its server-growth forecast for 2025 to a high-teens percentage range

- Analysts at IDC project global AI infrastructure spending to reach $758 billion by 2029

- Micron expects the HBM market to grow at a 40% annual rate through 2028

Micron expects the HBM market to generate $100 billion in revenue after three years, compared to $35 billion this year.
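The 40% annual growth rate and the $35 billion to $100 billion projection can be checked against each other with a compound-growth calculation. The sketch below assumes exactly three compounding years from a $35 billion base, which is one reading of "after three years". The function names are illustrative.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate needed to go from start_value to end_value."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


def project(start_value: float, annual_rate: float, years: int) -> float:
    """Value after compounding start_value at annual_rate for the given years."""
    return start_value * (1.0 + annual_rate) ** years


# Figures quoted in the article, in billions of dollars.
hbm_now = 35.0
hbm_later = 100.0
horizon_years = 3  # assumption: three full years of compounding

implied_rate = cagr(hbm_now, hbm_later, horizon_years)
projected_at_40 = project(hbm_now, 0.40, horizon_years)

print(f"Implied annual growth: {implied_rate:.1%}")
print(f"$35B compounded at 40% for 3 years: ${projected_at_40:.1f}B")
```

Under that assumption the two statements roughly agree: the projection implies annual growth of about 42%, and compounding at a flat 40% lands at about $96 billion, slightly short of the $100 billion figure.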

In January 2025, Nvidia confirmed that Micron is a core memory supplier for its GeForce RTX 50 Blackwell GPUs, signalling deep integration in the AI supply chain.

Still, Micron’s role as a supplier, rather than a platform architect, shapes the ceiling on its valuation. Memory chips face commoditisation pressures in ways that Nvidia’s GPU ecosystem simply does not.

Understanding how AI tools are reshaping enterprise decision-making more broadly is also relevant here. The rise of platforms like IBM SQL Data Insights Pro highlights how enterprises are rethinking AI infrastructure from the software layer up, and why the hardware providers powering that shift carry such weight in the market.

Who Should Investors Actually Watch?

The Nvidia vs Micron comparison ultimately comes down to where in the AI value chain you want exposure.

Micron captures value as AI memory demand grows. That is a real, durable tailwind. But memory markets remain cyclical and prone to supply-driven pricing swings. Micron’s current valuation already reflects much of the near-term optimism.

Nvidia captures value at every layer of the AI stack: chips, networking, software, inference, and agentic systems. Its moat widens every year, and its forward pipeline of $1 trillion in projected orders suggests the runway is still long.

Analysts from Wells Fargo and others maintained overweight ratings with targets well above current levels, some as high as $265 or more, following Nvidia’s GTC announcements, saying the company reinforced its moat in accelerated computing.

Disclaimer: The information in this article is for general informational purposes only and does not constitute financial, investment, or professional advice. The views expressed reflect publicly available analyst commentary and market data at the time of writing. Past performance of any stock or company is not a reliable indicator of future results. Always conduct your own research and consult a licensed financial adviser before making any investment decisions. The author and publisher hold no responsibility for any financial decisions made based on the content of this article.

Frequently Asked Questions

1. Is Nvidia a better investment than Micron right now?

Ans: According to analyst consensus, Nvidia currently offers greater upside potential than Micron. While both companies benefit from the AI infrastructure boom, analysts project roughly 47–49% upside in Nvidia shares versus around 6% for Micron from current price levels. That said, both carry different risk profiles, and investors should consult a financial adviser before making any decisions.

2. Why do analysts prefer Nvidia over Micron in the AI race?

Ans: Analysts favour Nvidia because it owns the full AI computing stack — from GPUs and networking hardware to software and agentic systems. Micron, while a vital memory supplier, plays a component role within that ecosystem. Nvidia’s moat, software depth, and $1 trillion-plus projected order pipeline give it a structural advantage that is difficult for competitors to replicate quickly.

3. Does Micron benefit from Nvidia’s growth?

Ans: Yes, directly. Micron supplies high-bandwidth memory (HBM3E) chips that go into Nvidia’s flagship Blackwell GPUs. As demand for Nvidia’s AI accelerators grows, Micron’s HBM order volumes grow alongside it. Nvidia confirmed this supply relationship in early 2025, making Micron one of the key memory partners embedded in Nvidia’s most advanced hardware to date.